Can Link Building Give You Or Your Client A Bad Reputation?

Your inbound links are one of the most important authority signals to search engines. However, indiscriminate link building can harm your reputation. Here is what you should know.

In the era of internet reviews, the reputation of your brand is crucial.

Google reviews are consulted by 63.6% of customers before going to a store. And 35% of respondents cited “faith in brand” as one of the most important considerations when picking a retailer.

Reputation management has grown to be an essential component of any digital strategy since a bad review may cost you sales and a bad reputation can be devastating.

Additionally, poor backlinks can harm your reputation with search engines as well as people’s perceptions of you.

But don’t worry; we are here to help. In this article, we’ll examine how poor link building can damage your reputation and offer some tips on how to keep it pristine.

Let’s first examine how outside sources might affect the credibility and reputation of your website.

How External Links Can Benefit Your Domain Authority

The relationship between backlinks and search engine optimization is probably something you already know. If not, or if you want a quick reminder, read this (but come right back).

One way external links affect your SEO is through their influence on domain authority, and that influence can be good or bad.

A page’s PageRank could rise, for instance, if many inbound links are going to it.

As a result, Google can interpret this as evidence of the authority of your material and adjust your search engine ranking accordingly.

Quality links also improve your domain’s ranking. Google gives incoming links varying weights depending on the website they come from.

An inbound link from a respected institution or government organization, for instance, tells the search engine that your information is legitimate, and that is reflected in your ranking.
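To make the weighting idea concrete, here is a minimal sketch of the classic, simplified PageRank iteration on a toy link graph. The page names, the damping factor of 0.85, and the iteration count are illustrative assumptions, and this is not a description of how Google ranks pages today.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    links maps each page to the list of pages it links to.
    Every page must appear as a key (use an empty list for no outlinks).
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with the same small baseline.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            if outlinks:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # A page with no outlinks spreads its rank over all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical toy graph: three pages link to "your-site", one to "rival".
toy_links = {
    "university": ["your-site"],
    "news": ["your-site", "rival"],
    "blog": ["your-site"],
    "your-site": [],
    "rival": [],
}
ranks = pagerank(toy_links)
```

In this toy graph, "your-site" ends up with a higher score than "rival" because it collects more inbound links, which is the intuition the paragraphs above describe.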

Building a Bad Reputation Through Link Building

Sadly, backlinks are not all rainbows, ice cream, and cute puppies. Your ranking and reputation may suffer if an inbound link is bad.

Let’s examine how your link-building strategy could harm you or your business.

1. Links in Bad Neighborhoods

We are constantly told not to acquire links in a bad neighborhood. When building links, staying away from bad neighborhoods may seem simple, but occasionally it is impossible.

Let’s use blog comments as an illustration. You find a blog and leave a comment there with a subtle link to a website. At that point, everything is OK. But when you go back to check on your comment, you find that it has been followed by a string of spam comments.

A potential customer who saw these comments would be reluctant to click on any link on the page, assuming it was spam just like the comments around it.

Owners of WordPress blogs may take it a step further. Using spam filter tools, they can designate comments as spam if they find any that they believe to be such. By doing this, the website URL will be recorded in a database and kept out of comments on other WordPress blogs.

As a result, if a client uses a link-building service and that service gets the client’s URL added to the spam database, the client will (often unknowingly) be blocked from leaving comments.

Imagine finding the ideal blog, read by exactly the right audience, where a well-placed comment could have a significant positive impact on your company.

Because your URL has been flagged as spam, you can’t publish a comment that includes it, and you lose out on the chance.

2. Using a Spam Agency or Being Identified as a Link Builder

This caution is particularly directed toward organizations that distribute impersonal, mass link requests.

The same website owners frequently receive several requests for different clients from the program that handles these sorts of link requests.

If a website owner becomes annoyed with these requests, they may go as far as publishing the company’s details in a blog post, including the link requester’s name, the agency’s name, and the client’s name.

Webmasters who are displeased can also report companies to spam denylists.

The agency and any clients connected to it can then end up listed on sites such as a Domain Name System Blacklist (DNSBL). It doesn’t take long to browse such a site and find a list of known agencies and their clients.

If your company’s whole model depends on building links for customers and you land on one of these denylists, business will get difficult.

3. Misrepresenting the Client

Alternatively, if an agency is given an email address on a client’s domain, that agency now speaks for the client in all of its correspondence and requests.

Can you imagine if you had a website and started receiving spam from your favorite business? Or if a brand you liked was spamming your blog through a person posing as a representative of the business? Your perception of the aforementioned brand would presumably fall, wouldn’t it?

4. Excessive Directory Submissions

Using web directories can help you improve your search engine ranking.

Twenty years ago, they were more widespread and had a greater influence on SEO, but they still seem to be a minor ranking factor now, especially for local search.

And a reputable, often sector-specific directory can be a reliable source of traffic and credibility. Of course, not all directories are equal.

And if we’re being honest, many of them, possibly even the majority, are at best doing nothing for your ranking and at worst actively harming it.

That’s because a lot of directories contain nothing but spam.

Search engines will lower the value of your website if you have submitted it for listing on one of these spam sites.

Remember the bad neighborhoods I mentioned in the first point? This is another version of the same problem.

5. Not Being a Good Community Member

People can discuss their hobbies and opinions in forums on the internet.

By taking part in the discussion, you can draw attention to a URL in your signature and encourage clicks.

However, there are several ways that things may go wrong for you.

For instance, suppose you sign up for a forum and post the same thing in every subforum in the hope of attracting attention, earning clicks, and enhancing your reputation.

The moderators will quickly work out what is going on and flag you as a spammer. You have instantly created bad links.

Damaging Your Reputation With Search Evaluators

So far, we have only covered how bad links can get you into trouble with search engines. However, you also need to take into account another aspect of your website’s reputation: actual users’ experiences, particularly those of Google search assessors.

Your site’s external reputation is one of the factors they consider when assessing its E-A-T (expertise, authoritativeness, and trustworthiness).

These assessors keep an eye on client complaints and issues to gauge the authority of your website. They may learn more about the normal customer experience by visiting websites like Yelp, Amazon Customer Reviews, and Facebook Ratings and Reviews.

Even if Google’s algorithm has you rated well, if they deem your site has poor authority, they may flag it as a low-quality response to a search query.

How to Maintain Your Reputation

Now that you are aware of the risks low-quality backlinks pose to your online reputation, how can you avoid developing a poor reputation when link building?

Here are a few straightforward recommendations:

- Don’t spam en masse!

- Place your links somewhere other than on heavily spammed websites.

- Make each link request unique and make sure the link is appropriate for the website.

- Read the blog content, then leave insightful comments.

- Disavow bad links.
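For the last recommendation, read here as disavowing bad links, Google Search Console accepts a plain-text file with one URL or `domain:` entry per line and `#` for comment lines. The sketch below builds such a file; the helper name, the domains, and the URLs are made-up placeholders.

```python
def build_disavow_file(domains, urls, note=""):
    """Return the text of a disavow file.

    domains: whole domains to disavow (written as "domain:example.com").
    urls: individual page URLs to disavow.
    note: optional comment placed on the first line.
    """
    lines = []
    if note:
        lines.append(f"# {note}")
    # Deduplicate and sort so the file is stable between runs.
    lines.extend(f"domain:{domain}" for domain in sorted(set(domains)))
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


# Placeholder entries for illustration only.
disavow_text = build_disavow_file(
    domains=["spammy-directory.example", "spammy-directory.example"],
    urls=["https://forum.example/thread?id=1"],
    note="Links we asked to have removed without success",
)
```

Disavowing is usually treated as a last resort, after you have tried to get the links removed at the source.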

Take No Shortcuts

Growing links requires effort, just as building your brand’s reputation does.

Remember that many people will discover your brand for the first time when you appear in search engine results; if you don’t appear, you won’t receive any exposure.

Therefore, please don’t be careless with your linking technique.

Be deliberate and diligent in your quest for high-quality backlinks that will boost rather than degrade your search ranking.

You may create the links you desire and preserve your reputation by exercising a little caution and making an effort.

Which is Better for You: Global or Local Websites?

Your website is one of the most effective tools you have when operating a worldwide business to connect and engage with your target market. Learn about four factors you should think about to decide if a local or a global site is better for you.

If you are operating an offline business in several different nations, you are already aware of how varied each country’s audience is.

Additionally, the laws and practices governing commerce vary from nation to nation. You should take these, along with online laws, into account when creating a website.

Geotargeting, various search engines, and variations in each local audience are some crucial factors that site owners must always keep in mind from an international SEO perspective.

When selecting whether to have a worldwide site or distinct local sites – one for each targeted nation or language – there are still other aspects to take into account, such as maintenance costs and the accessibility of local teams to manage the sites. We have outlined four factors that show which one is preferable.

Laws and Regulations Related to Data and Privacy

It is impossible to enumerate every law and rule that must be followed to conduct business in every nation on the planet. However, the following are the two most significant sets of laws and rules that website owners should be aware of:

- Security of personal information.

- Accessibility of a website.

Each region, nation, or state is free to establish its own regulations, which may take the form of a general policy, guidelines, legislation, or another kind of rule. Some apply to all websites, while others apply only to those of a certain type, such as public-sector and government sites.

In the European Union (EU)

The General Data Protection Regulation (GDPR) of the European Union (EU) is perhaps the privacy and data protection law that receives the most attention.

It governs how people, businesses, and organizations process the personal data of individuals in the EU.

In California

Since the State of California enacted the California Consumer Privacy Act (CCPA), many businesses anticipate that other states will soon approve similar privacy rules.

In response, several websites now display the cookie consent notice to all visitors, regardless of where they access the site from.

In Japan

The Act on the Protection of Personal Information in Japan was initially established in 2005, had a significant revision in 2016, and has been in effect since 2017. In addition to other regulations, it requires Japanese websites to disclose their privacy policies.

The information required by the Commercial Transactions Law must be posted on e-commerce websites as well.

Your Japanese website must adhere to these rules even if it is controlled in the United States, especially if you have a physical presence in Japan.

Images from the footer of Apple’s websites in the United States, United Kingdom, Japan, and China are seen above.

A page on utilizing cookies regarding GDPR is available on the UK website in addition to a usual privacy policy.

According to Chinese laws, the Chinese website displays the website registration number beneath the footer links.

Laws & Regulations Concerning Accessibility

The Americans with Disabilities Act (ADA) gained attention last month when a federal lawsuit was brought against Taco Bell. Even though it was against the restaurant, this caught the attention of numerous website owners.

There are already laws and rules governing IT accessibility for federal agencies in the United States as well as several standards and guidelines to be taken into account generally, such as the Information and Communication Technology Standards and Guidelines.

Websites are covered by the ADA in both the public and private sectors. Numerous changes to websites’ accessibility will enhance everyone’s experience with them, not just those with impairments.

Accessibility to web material is frequently a requirement in many nations and areas, including Canada, China, the EU, Japan, and the United Kingdom.

Each nation has distinct standards for accessibility, just as there are diverse data and privacy laws and regulations.

Keeping up with these ever-evolving criteria is becoming an increasingly difficult burden for website owners, especially for those who operate globally. Failure to follow them may result in financial loss and damage to your brand’s reputation.

Local Market Trends & Rivals

Since I frequently work with websites aimed at the Asian market, I can generally identify by the design and content whether a website belongs to a local business or is the local branch of a larger corporation.

The difference comes down to how well they understand the local market and the target audience, not to design talent.

Comparing the designs of the two kinds of website is the simplest way to demonstrate this difference. The layout, color palette, and graphics are other obvious clues to where a site was created.

Different countries have different expectations for how orders should be paid for on e-commerce sites. Exchange and return policies are another point of difference between nations.

Even though these variations don’t affect the entire website, they may lead users to abandon their shopping carts.

The content of websites also reflects the variations in local interests. While local competitor websites have material created to meet the unique interests of the local audiences, global sites frequently contain content selected by the HQ nation.

The worldwide website may lose out on significant commercial opportunities if it is unable to fulfill local searchers’ needs.

Poorly localized material that is not specifically prepared for local audiences won’t be competitive in the search results as Google develops the algorithms to offer the best content for each searcher.

Multiple local websites versus one global website

If you have global sites under one domain that use the same webpage templates for all national websites, create a list of the regulatory requirements from all relevant nations and apply them regardless of the target country.

This looks like a huge undertaking, but if you have a smaller team or don’t have a team in every location, it is the best way to cover all your bases.

Given that rules and regulations are frequently revised, having someone in charge of studying and staying current on them would be beneficial in this situation.

If the following apply, you might want to think about developing distinct websites for each target nation:

- You have a sizable team in each nation to oversee the website.

- You have sufficient funding to support it.

Even dividing the sites by geographic regions that share comparable laws and regulations, or comparable user and cultural trends, would give you more freedom, better compliance, and a design suited to regional audiences.

For instance, you could create the various country and language sites for the EU under one domain set up for the EU market. That is probably simpler than managing website design and content separately for a specific audience in each EU nation.

The nations of Central and South America might be another potential market for a single domain hosting several country websites.

Many businesses that view China as one of their key markets may find it beneficial to develop a separate Chinese website in light of the diverse characteristics of the Chinese market, including Baidu’s capabilities and algorithms, connection speed, website registration guidelines, and cybersecurity laws (also known as the “Great Firewall of China”).

Once you have a separate Chinese site, you can host it in China to improve download speed.

With a website registered with the Chinese government and offering material tailored exclusively for the Chinese market, getting a ccTLD is simpler.

Final Remarks

When each target nation has its own website, you have many more options, along with the flexibility to comply with regional laws and rules and to reflect regional interests in the content and website design.

Separate sites are also excellent for geotargeting in SEO, which is a major problem for many international website owners. They do, however, come with higher overhead expenses.

Is Author Authority a Google Ranking Factor?

The idea of author authority has been around for a while. But how does it affect website rankings? Let’s look more closely.

Imagine you are experiencing a minor medical issue. Your jaw makes an audible clicking sound behind your molars each time you eat. Although it isn’t painful, it is uncomfortable. You search that all-purpose information bank, the internet, for a solution to this unpleasant issue.

As you browse the search engine results, which do you believe is the more trustworthy source: a website written by an ear, nose, and throat doctor with 10 years of medical experience, or one written by a man who runs a Minecraft blog?

It’s a clear decision. That is not to say that the information on the Minecraft blogger’s page is inaccurate. Even so, it’s doubtful that he is more knowledgeable about your condition than a healthcare practitioner with a medical degree, five years of residency training, and ten years of relevant experience.

It is certain that credibility counts. And there has never been a time when this is more true than now, when false information is easily accessible online.

And even while the majority of authors truly want to help, there is a lot of information online that is simply dangerous. Whether the incorrect information was intentionally spread or was simply a mistake, it may still be very harmful.

The Claim: Page Rankings Are Influenced by Author Authority

Google places a strong emphasis on E-A-T when evaluating a webpage’s overall quality and how effectively it responds to a search query. The letters stand for expertise, authoritativeness, and trustworthiness.

But does this also include the author’s E-A-T? Does it matter whether the author of an article is a seasoned professional as opposed to a new journalism graduate?

The idea of author authority has been around for a while. And SEO specialists and digital marketers have long disagreed over what role it plays in site rankings.

Let’s look more closely.

The Evidence: Author Authority and SERP Ranking

Google has never said that the author of an article has any direct impact on rankings. Even so, that doesn’t mean you should disregard it.

There is evidence that the dominant search engine is interested in identifying authors.

Google applied for a patent for Agent Rank way back in 2005, which is eons ago in SEO years. It allowed the search engine to rank articles by reputation using digital signatures, which was intended to help screen out low-quality information.

Google officially endorsed authorship markup using rel="author" in 2011. However, uptake of this tag was slow. Only 30% of authors were using it, according to a 2014 study, and Google formally discontinued it that same year.

Gary Illyes, a Google Webmaster Trends Analyst, stated during the 2016 SMX conference that while the firm does not use authorship, it does have methods in place to identify the author of a piece of content. This appears to be a reference to the function that writers have in Google’s Knowledge Graph.

If the Knowledge Graph is new to you, it is a vast collection of facts and entities (i.e., things or concepts that are singular, unique, well-defined, and distinguishable). Google does formally acknowledge authors, even if it does not have a comprehensive list of content producers.

Author reputation is important, but it’s important to avoid confusing reputation with knowledge and authority.

Reputation is assessed under the Search Quality Raters Guidelines, a collection of guiding principles used to instruct the human raters who assess the quality of search results and occasionally test suggested improvements to search algorithms.

According to one of these rules, a low content creator score is sufficient to assign the work a poor quality score. But Google has always been open about the fact that these ratings are never used to influence search results.

Google filed a patent for Author Vectors in March 2020, which enables it to determine who produced unlabeled content. It does this by assessing writing styles, levels of expertise, and interest in various topics.

How SEO Professionals Predict & Correct Demand Shifts Using Search Data

Step 1: Seasonality Trends + Vertical-Specific Data Help You See Demand Shifts Faster

Aside from the regular seasonal events that occur at certain times of the year or the well-known international holidays for the e-commerce industry, each vertical has its characteristics, some of which are less obvious when working with B2B stakeholders.

By demonstrating an understanding of these nuances in the search data, you establish credibility in your consulting abilities and become more than just a member of the team carrying out SEO tasks. To keep your focus on the next strategic move, you need a tool that makes seasonality monitoring efficient.

When targeted keywords are approaching peak season or are out of season, SEO monitor’s automatic labels let you know so you can plan content ahead of time. But it all comes down to how the key data points come together.
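As a rough illustration of what a seasonality label could look like, the sketch below compares a month's search volume with the yearly average. It is a hypothetical heuristic, not the method any particular tool uses; the thresholds (1.25 and 0.75) and the sample volumes are made-up assumptions.

```python
def season_label(monthly_volumes, month, peak_ratio=1.25, low_ratio=0.75):
    """Label a month (index 0 = January) by volume relative to the yearly average."""
    average = sum(monthly_volumes) / len(monthly_volumes)
    index = monthly_volumes[month] / average
    next_month = (month + 1) % len(monthly_volumes)
    next_index = monthly_volumes[next_month] / average
    if index >= peak_ratio:
        return "peak season"
    if next_index >= peak_ratio:
        # Next month crosses the peak threshold: time to plan content.
        return "approaching peak"
    if index <= low_ratio:
        return "out of season"
    return "steady"


# Made-up monthly search volumes for a summer product, January first.
volumes = [400, 420, 500, 700, 1100, 1600, 1800, 1500, 900, 600, 450, 400]
april_label = season_label(volumes, 3)
july_label = season_label(volumes, 6)
january_label = season_label(volumes, 0)
```

With these sample numbers, April is flagged as approaching peak, July as peak season, and January as out of season.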

Step 2: Create SEO Keyword Categories & Easily See Which Sectors Are Fluctuating

The right level of granularity may also be required to ensure that the data yields the desired results. This is straightforward and efficient when the business logic is already reflected in the website’s data structure.

Additionally, the ability to keep an eye on specific priority categories and their respective competitors allows for earlier gap detection and faster decision-making.

One way to do this is with SEO monitor’s rank tracker, which provides daily ranking data for desktop and mobile devices, as well as a keyword grouping system that preserves the category hierarchy.

Because all of the categories are accurately segmented, you can easily identify which areas are experiencing steady, rising, or falling demand in the search data.

How does that look?

Let’s look at the Cocktail Glasses category as an example. Visibility had improved overall, but organic traffic had decreased significantly.

With a tool to provide all pertinent data in a single view, the staff could use this during client meetings to quickly update them on market changes while providing explanations for why traffic wasn’t where they had expected it to be.
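The category view described above can be approximated with a few lines of code. This is a simplified sketch under stated assumptions: the keyword rows, the category names, and the 5% tolerance are invented for illustration and do not come from any real account or tool.

```python
from collections import defaultdict


def demand_by_category(keywords, tolerance=0.05):
    """Classify each category's demand as rising, falling, or steady.

    keywords: iterable of (category, previous_volume, current_volume).
    tolerance: relative change treated as noise (5% by default).
    """
    totals = defaultdict(lambda: [0, 0])
    for category, previous, current in keywords:
        totals[category][0] += previous
        totals[category][1] += current

    trends = {}
    for category, (previous, current) in totals.items():
        change = (current - previous) / previous if previous else 0.0
        if change > tolerance:
            trends[category] = "rising"
        elif change < -tolerance:
            trends[category] = "falling"
        else:
            trends[category] = "steady"
    return trends


# Hypothetical search volumes for two periods.
sample = [
    ("cocktail glasses", 1000, 700),
    ("cocktail glasses", 500, 450),
    ("wine glasses", 800, 1000),
    ("tumblers", 600, 610),
]
trends = demand_by_category(sample)
```

A falling category paired with stable rankings points to a market shift rather than a performance problem, which is the distinction the example above is making.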

Step 3: Use Annotations, Tags, and Transparency to Quickly Discover What Happened & When

For B2B, where the hospitality industry was about to enter a full summer season, you could also use trend data across all categories to see which product lines are in higher demand, then quickly shift strategy to focus on these hot areas and maximize exposure.

Utilize Annotations to Increase Effectiveness

Using annotations to streamline campaign management is another strong recommendation. Consider making full use of the annotation feature in each tool in your SEO stack so that you can:

- Exactly recall what happened and when.

- Analyze the effectiveness of search engine optimization in comparison to external factors impacting the target.

- Explain the reasoning to your customers and be proactive about any necessary course corrections.

Bonus Step for SEO Agencies: Handle Your Customers’ Expectations Consistently

The search engine optimization team can be trusted to steer the business in the right direction because of their level of agility and openness.

However, it also means that you have to be honest and disclose every step along the way, even if traffic drops and the SEO campaign seems to be struggling.

You can easily identify and address changes in demand for search engine optimization with the help of an SEO monitor.

It’s not enough to look at search numbers in isolation because demand changes occur in shorter loops, especially when working with E-commerce stakeholders.

Making the right decisions and constantly changing search engine optimization efforts requires taking into account yearly search trends, keeping a close watch on seasonality, and understanding how the market changes. After all, it’s also important to comprehend the client’s business, its advantages, and how the competition looks for each category of services or goods. You should have the appropriate level of detail to distinguish between performance issues and market problems.

With all of the aforementioned factors in mind, SEO monitor created a rank tracker to help search engine optimization specialists grow their businesses. With it, you get:

- Daily rankings for desktop and mobile, included in the same price.

- Monitoring of all competitors, leaving you to decide which ones matter most to you.

- Three categories of keyword grouping, which make handling complex campaigns simple.

- No restrictions on the number of users, so you can invite both your staff and your clients to the platform.

These are just a few of the solutions that enable clients to concentrate on business expansion. If you have further questions, you may consult with me. I will help you to increase the trust and transparency of the search engine optimization industry.

Tips & Best Practices for Monitoring the Performance & Health of Websites

Several factors affect the health and functionality of websites. Check out this guide’s suggestions for improvement if your website is running slowly.

A website or e-commerce project may be set up and launched successfully, but the job is far from over once the site is up. Your website will suffer without proper health and performance monitoring, and those effects may go well beyond just a slow load time. Did you know that by looking after your site you will also help the environment, in addition to increasing user satisfaction?

In addition to affecting those who design or use them, poorly functioning websites also leave a larger carbon footprint. The typical web page generates 0.5 grams of carbon dioxide per visit, according to the Website Carbon Calculator, which calculates the carbon footprint of websites.

That amount increases to 0.9 grams when taking a look at the mean, which also takes into account highly polluting websites. In addition to the problems on a worldwide scale, a poorly functioning website will cost you time, money, and income. Similar to our health, a website is simple to neglect and challenging to improve.
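To put those per-visit figures in perspective, here is a small back-of-the-envelope calculation. The 0.5 g and 0.9 g values are the ones quoted above; the traffic figure of 100,000 visits per month is an arbitrary assumption.

```python
TYPICAL_GRAMS_PER_VISIT = 0.5  # typical page, as quoted above
MEAN_GRAMS_PER_VISIT = 0.9     # mean, which includes highly polluting sites


def yearly_co2_kg(monthly_visits, grams_per_visit):
    """Estimate yearly CO2 emissions in kilograms for a given traffic level."""
    return monthly_visits * 12 * grams_per_visit / 1000


# Assumed traffic: 100,000 visits per month.
typical_kg = yearly_co2_kg(100_000, TYPICAL_GRAMS_PER_VISIT)
mean_kg = yearly_co2_kg(100_000, MEAN_GRAMS_PER_VISIT)
```

At that assumed traffic level, the estimate works out to roughly 600 kg of CO2 per year for a typical page and roughly 1,080 kg at the mean.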

To perform suitable monitoring procedures and speed up processing, you must be aware of the key elements that contribute to the overall health of a website. Making websites is now simpler and quicker thanks to the development of website builders. All you have to do is register, select a domain, select a template, and presto! Within seconds, you have a website.

Many website owners, however, ignore the reality that building a website is simply the first step. Additionally essential are appropriate performance upkeep and health surveillance. On that topic, let’s examine some crucial website performance and health metrics, including what they are, how to track them, and how to make changes.

Factors to Monitor for a High Website Health Score

Core Web Vitals:

Google PageSpeed Insights

Your Core Web Vitals should be the first metrics you take into account when testing performance. These performance indicators measure loading speed, responsiveness, and visual stability, enabling you to assess the quality of your website’s user experience.

Various programs track your Core Web Vitals, but Google PageSpeed Insights is a popular choice among website owners. Enter your URL into the tool and you will get a report indicating whether you passed the Core Web Vitals assessment and which additional factors need your attention. The three main metrics you will notice are as follows:

Largest Contentful Paint (LCP)

Aim for a time of no more than 2.5 seconds.

If your score is greater than 2.5 seconds, this may mean that your server is running slowly, that resource load times are inadequate, that you have a large amount of render-blocking JavaScript and CSS, or that client-side rendering is taking too long to complete.

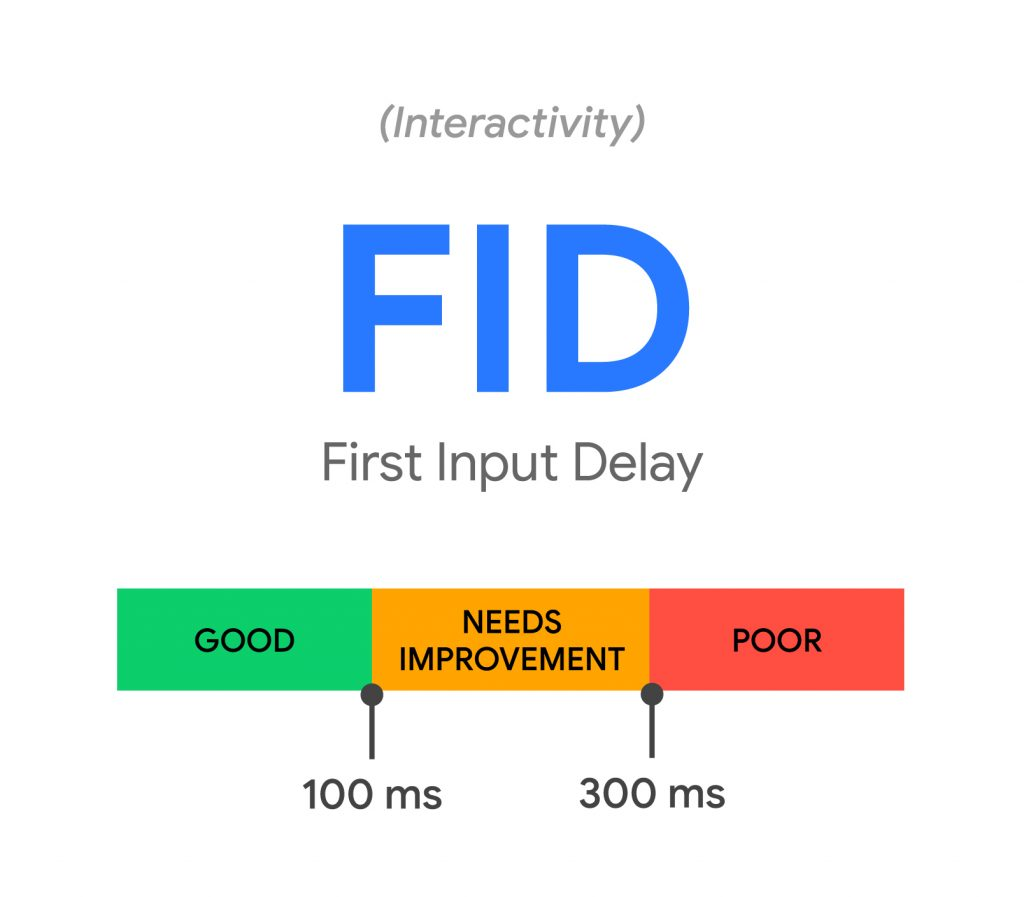

First Input Delay (FID)

Aim for a score of no more than 100 milliseconds.

If your score is higher than that, you might need to reduce the workload on the main thread, the impact of third-party code, the execution time of JavaScript, transfer sizes, and the number of requests.

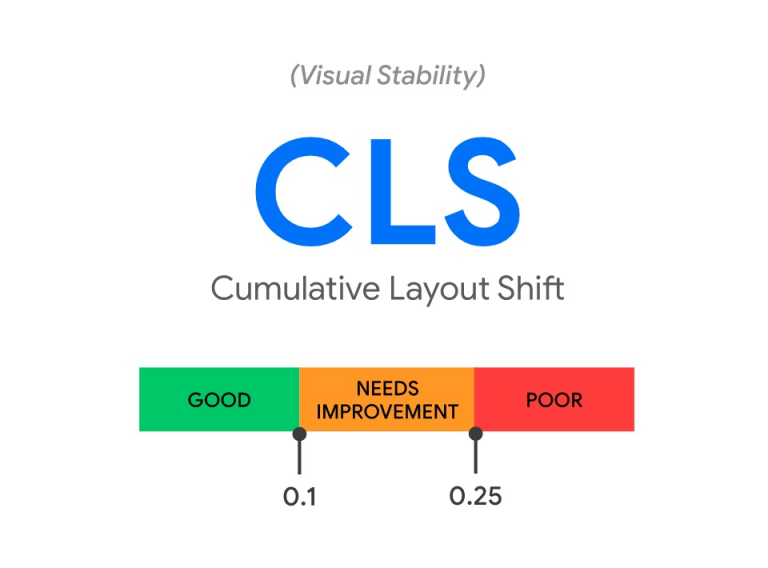

Cumulative Layout Shift (CLS)

Target a score of 0.1 or below.

If your score is higher, include size attributes on your images and video elements (or reserve the space with CSS aspect ratio boxes) to prevent unexpected layout shifts. Keep content from overlapping, and take care when animating any transitions.
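The three targets above can be checked together. The sketch below is a minimal helper that compares measured values against the thresholds stated in this section; the dictionary keys and the sample measurements are hypothetical.

```python
# Targets as stated in this section.
THRESHOLDS = {
    "lcp_seconds": 2.5,       # Largest Contentful Paint
    "fid_milliseconds": 100,  # First Input Delay
    "cls": 0.1,               # Cumulative Layout Shift
}


def failing_metrics(measured):
    """Return the names of metrics whose measured value exceeds its target.

    measured must contain all three keys used in THRESHOLDS.
    """
    return [name for name, limit in THRESHOLDS.items() if measured[name] > limit]


def passes_core_web_vitals(measured):
    """True when every metric is at or below its target."""
    return not failing_metrics(measured)


# Made-up measurements for one page.
sample_page = {"lcp_seconds": 3.1, "fid_milliseconds": 80, "cls": 0.1}
problems = failing_metrics(sample_page)
```

For the sample page, only the LCP value is over target, so that is where optimization effort would go first.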

Page Speed Blockers

Your website’s load time may be impacted by several variables. However, if you’re short on time and would want to start by concentrating on the primary offenders, pay particular attention to the following elements:

- Unused JavaScript and CSS Code.

- Render blocking JavaScript and CSS Code.

- Unminified JavaScript and CSS Code.

- Large image file sizes (more on that below).

- Too many redirect chains.
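Redirect chains from the list above are easy to reason about once the redirects are written down as a mapping. The sketch below follows such a mapping and counts the hops; the URLs and the mapping itself are invented, and in practice you would collect them from a crawl or your server configuration.

```python
def redirect_chain(start_url, redirects, max_hops=10):
    """Follow a mapping of URL -> redirect target and return the full chain."""
    chain = [start_url]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        if target in chain:
            # Redirect loop: record the repeated URL and stop.
            chain.append(target)
            break
        chain.append(target)
    return chain


# Hypothetical redirects: http -> https -> renamed page -> trailing slash.
redirects = {
    "http://example.com/old": "https://example.com/old",
    "https://example.com/old": "https://example.com/new",
    "https://example.com/new": "https://example.com/new/",
}
chain = redirect_chain("http://example.com/old", redirects)
hops = len(chain) - 1
```

Here the visitor goes through three hops before reaching the final page; pointing the first URL straight at the final one would remove two of them.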

Consider preloading JavaScript and CSS files to increase their loading speed.

Enabling Early Hints is another option. The server sends an early HTTP response that tells the browser which resources it should begin downloading, taking advantage of “server think-time” to accelerate page loads.
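To show what preloading and Early Hints look like on the wire, the sketch below builds a `Link` header with `rel=preload` entries and wraps it in an informational `103 Early Hints` response. The file paths are placeholders, and in practice your web server or CDN would normally send this for you; the helper names are hypothetical.

```python
def preload_link_header(resources):
    """Build a Link header value from (path, as_type) pairs."""
    return ", ".join(
        f"<{path}>; rel=preload; as={as_type}" for path, as_type in resources
    )


def early_hints_response(resources):
    """Raw text of an informational 103 Early Hints response."""
    return (
        "HTTP/1.1 103 Early Hints\r\n"
        f"Link: {preload_link_header(resources)}\r\n"
        "\r\n"
    )


# Placeholder asset paths for illustration.
hints = early_hints_response([
    ("/css/site.css", "style"),
    ("/js/app.js", "script"),
])
```

The browser can start fetching the listed stylesheet and script while the server is still generating the final `200` response.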

To test your website:

Navigate to https://pagespeed.web.dev/

Enter the URL of the page you want to scan.

I’d suggest starting with your homepage.
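If you prefer to run the same test from a script, PageSpeed Insights also has an HTTP API. The sketch below only builds the request URL for the v5 `runPagespeed` endpoint and does not send it; the page URL is a placeholder, and an API key (not shown) may be needed for regular use.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy="mobile"):
    """Build a PageSpeed Insights API request URL for one page.

    strategy is "mobile" or "desktop".
    """
    query = urlencode({"url": page_url, "strategy": strategy})
    return f"{PSI_ENDPOINT}?{query}"


# Placeholder page; start with your homepage as suggested above.
request_url = psi_request_url("https://example.com/")
```

Fetching that URL with any HTTP client returns a JSON report containing the same lab and field data shown in the web interface.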

WebPageTest.org is another useful resource that displays your Core Web Vitals in addition to additional metrics that may greatly enhance the functionality and health of your website. It’s also free!

Simply put a page URL into the site’s search box to launch a comprehensive test from the default location. Additionally, you may sign up as a user and select from a list of locations to test your website across various nations, devices, and browsers.

WebPageTest will provide you with a Performance Summary that is divided into four main sections: Opportunities and Experiments, Observed Metrics, Real User Measurements, and Individual Runs. This Performance Summary will show you exactly where your website stands in terms of performance and what might be slowing it down.

Design Elements

We often delegate concerns about website performance and health monitoring to the tech staff. But what if I told you that your website’s design and the components you use may determine how well it performs? It’s time to include the design team.

Although images are excellent, if they are not scaled correctly, they may cause your website to load slowly. Resize your photographs before posting them, and don’t upload huge files if they won’t be displayed in full.

Image Optimization

Similarly, instead of using bulkier JPEG or PNG files, compress your photos and experiment with various file formats like WebP, JPEG 2000, and JPEG XR. To guarantee that pictures load as the user views them rather than all at once, think about using native lazy loading.

The loading="lazy" attribute on an <img> or <iframe> element tells the browser to load it only when the user scrolls close to it, and almost all browsers, including Chrome, Safari, and Firefox, support it. Avoid lazy loading above-the-fold images, as this will hurt your page’s LCP score. Google suggests using the fetchpriority="high" attribute on above-the-fold images to improve LCP.

If you use that attribute, you don’t need to preload the images: above-the-fold images should either be preloaded or given the “fetchpriority” attribute. Additionally, use the “srcset” attribute to serve images scaled for the screen size, so that small displays do not download unnecessarily large files. This can significantly improve the LCP score.
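To make the attribute choices above concrete, here is a minimal sketch of a template helper that emits an image tag with the right hints. The function name, file names, and widths are hypothetical; the attributes themselves (`loading`, `fetchpriority`, `srcset`, `sizes`) are standard HTML.

```python
from html import escape


def img_tag(src, alt, above_the_fold=False, srcset=None, sizes=None):
    """Return an <img> tag: high priority above the fold, lazy loading below it."""
    attrs = {"src": src, "alt": alt}
    if srcset:
        attrs["srcset"] = srcset
    if sizes:
        attrs["sizes"] = sizes
    if above_the_fold:
        # Likely LCP candidate: ask the browser to fetch it early, never lazily.
        attrs["fetchpriority"] = "high"
    else:
        # Off-screen image: defer loading until the user scrolls near it.
        attrs["loading"] = "lazy"
    rendered = " ".join(f'{key}="{escape(value, quote=True)}"' for key, value in attrs.items())
    return f"<img {rendered}>"


hero = img_tag(
    "hero-1200.webp",
    "Storefront",
    above_the_fold=True,
    srcset="hero-600.webp 600w, hero-1200.webp 1200w",
    sizes="100vw",
)
gallery = img_tag("gallery-1.webp", "Product photo")
print(hero)
print(gallery)
```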

Fonts

Extravagant custom fonts can be hard to read, even for customers with 20/20 vision, and they can also significantly slow down your website. Replace externally hosted fonts with web-safe fonts where you can, and consider using Google Fonts, provided that they are served through Google’s CDN.

Moreover, consider using variable fonts in your website’s design, since this font standard can drastically reduce font file sizes. Preload your fonts as well.

Animations and auxiliary features

It goes without saying: don’t overdo it with sliders, animations, videos, special effects, and so on. A few dynamic components here and there are fine, but overloading your website with moving graphics can be a burden for both visitors and servers.

Avoid using non-composited animations since they need the page to be painted again, which adds to the main thread’s workload and makes the website look visually unsteady as it loads.

PWA solution for mobile optimization

Why not go all out and create a Progressive Web App (PWA) from your mobile site? PWAs load cached material faster since they are designed with service workers. Additionally, PWAs are similar to native mobile apps, which are excellent for performance and user experience.

Additional Technical Performance Metrics

Uptime

Your website’s uptime demonstrates how well it is operating. Your website’s user experience, Google rankings, and ultimately your income will suffer if it often crashes or goes down. Aim for a 99.999% uptime if at all feasible, and test your website from many locations.
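To put an uptime target in perspective, it helps to convert the percentage into a downtime budget. The short calculation below is only an illustration (the function name is ours, and it assumes a 365-day year): at 99.999% uptime you can afford roughly five minutes of downtime per year.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(uptime_percent: float) -> float:
    """Minutes per year a site may be down while still meeting the uptime target."""
    return MINUTES_PER_YEAR * (1 - uptime_percent / 100)


for target in (99.0, 99.9, 99.99, 99.999):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% uptime allows about {minutes:.1f} minutes of downtime per year")
```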

Uptime monitoring tools:

- StatusCake.

- Pingdom.

- Better Uptime.

- UptimeRobot.

Database Performance

Your website may be responding slowly because of poor database performance if you’ve examined the fundamentals yet nothing seems to be working.

This may be verified by keeping track of how quickly your queries are responding and identifying the particular database queries that are taking the longest.

When you’ve finished, start optimizing! You may find solutions based on precise data and rapidly identify bottlenecks by using technologies like Blackfire.io.
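Before reaching for a dedicated profiler, you can get a first impression by timing queries yourself. The sketch below is a simplified illustration using Python’s built-in sqlite3 module with an in-memory database; the table, the data, and the `time_queries` helper are hypothetical, and a production site would measure its real database instead.

```python
import sqlite3
import time


def time_queries(conn, queries):
    """Run each query once and return (seconds, sql) pairs, slowest first."""
    timings = []
    for sql in queries:
        start = time.perf_counter()
        conn.execute(sql).fetchall()
        timings.append((time.perf_counter() - start, sql))
    return sorted(timings, reverse=True)


# Build a small throwaway table to have something to measure.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total REAL)")
conn.executemany(
    "INSERT INTO orders (customer, total) VALUES (?, ?)",
    [(f"customer-{i % 500}", i * 1.5) for i in range(50_000)],
)

queries = [
    "SELECT COUNT(*) FROM orders",
    "SELECT customer, SUM(total) FROM orders GROUP BY customer",
]
for seconds, sql in time_queries(conn, queries):
    print(f"{seconds * 1000:8.2f} ms  {sql}")
```

A single run is noisy, so treat the numbers as a hint about where to look rather than a benchmark.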

Web Server’s Hardware

If your disk space is overflowing with log files, photos, videos, and database entries, your website can slow down. Monitor CPU load frequently, particularly after implementing updates or making design modifications. Tools like New Relic can help you monitor and debug your whole stack.

Search Visibility

Many of the metrics addressed above already have a big influence on search visibility. Therefore, by optimizing your website pages with Google PageSpeed Insights, you are also taking vital steps to improve your SEO. You can also choose the website crawling tool that best meets your needs, such as Semrush, Sitechecker.pro, Screaming Frog, or DeepCrawl.

Website crawlers help find all types of issues, such as:

- Broken links.

- Broken images.

- Poor Core Web Vitals metrics.

- Redirect chains.

- Structured data errors.

- Noindexed pages.

- Missing headings and meta descriptions.

- Mixed content.

Make sure you’re prepared for the following issues:

XML sitemap: Verify that your sitemap is constructed appropriately and determine whether any adjustments or a new submission of your sitemap through Google Search Console are required.

Robots.txt: Use a robots.txt file to better regulate crawl traffic on your web pages (HTML, PDF, or any other non-media formats that search engines can read), especially if you worry that Google’s crawler may be overtaxing your server.
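Before deploying a robots.txt change, it is worth checking that the rules block only what you intend. The sketch below uses Python’s standard `urllib.robotparser` module to test a few URLs against a sample file; the rules and the example.com URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; replace it with your own rules.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in ("https://example.com/blog/post", "https://example.com/admin/login"):
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "blocked"
    print(url, "->", verdict)
```

Note that Python’s parser may not match Google’s interpretation in every edge case, so use Google Search Console for a final check.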

Website Caching and Security

Purchase an SSL certificate!

A secure website is in good health. Even if your website runs flawlessly and has a perfect score, visitors (or search engines) won’t trust it if the address doesn’t begin with https://. An SSL certificate is simply a piece of code that runs on your server and creates an encrypted connection, ensuring the security of user data.

Although obtaining an SSL certificate is not very challenging, the manual process might take a while. However, if you are working with a reputable hosting company like Bluehost, your provider will almost certainly be able to provide a free SSL certificate for your domain.

Think About Using A CDN

A content delivery network (CDN) is a group of distributed servers that work together to deliver content quickly over the internet. In other words, a CDN speeds up your website, regardless of a user’s location, by keeping copies of its content on servers close to that user. This is a form of caching.

A CDN is essential if you have a global presence! It speeds up page load time, reduces bandwidth costs, spreads out traffic (cutting the likelihood of your site going down), and improves security with features like DDoS mitigation.

Leading companies in the sector include Google Cloud CDN, Amazon CloudFront, and Cloudflare. Many alternatives are available, so do your homework to choose the best CDN for your website and company needs.

Configure a Web Application Firewall

A Web Application Firewall (WAF) safeguards web applications by filtering out suspicious HTTP traffic. It aims to shield apps against attacks like:

- Cross-site request forgery (CSRF).

- Cross-site-scripting (XSS).

- File inclusion.

- SQL injection.

Below is a list of the most popular and trusted web application firewalls:

- Cloudflare WAF.

- GoDaddy Firewall.

- Microsoft Azure.

And for WordPress, you can consider installing plugins such as:

- WordFence.

- Sucuri.

- All-In-One Security (AIOS).

Verdict

It’s all done now! As you can see, several factors affect the functionality and health of websites. Checking your Core Web Vitals to identify where you can make improvements should be your first logical move if your website is running slowly. Additionally, you may examine additional technical metrics and make improvements to them.

The health of your website depends on SEO as well, so look at your links, search visibility, and potential load blocks to determine where you can make improvements. Don’t forget to use caching and your SSL certificate as well. Your website’s design can also affect how quickly and effectively it loads, especially if you or your designers like to use heavy design components. Keep in mind to optimize your photos, stay away from dense typefaces, and utilize animations sparingly.

AMP: Is It A Google Ranking Factor?

AMP can improve other ranking factors like speed, but is it a ranking factor in and of itself? AMP is an HTML framework. It enables desktop-optimized websites to generate lightning-fast mobile versions of their web pages.

AMP was developed by Google, which has led to assertions that it gives pages a ranking edge over non-AMP pages in mobile searches. When you consider it, AMP checks many boxes that suggest it could be used as a ranking factor:

- Created by Google

- Improves the mobile-friendliness of websites

- Enhances page speed

Although Google aggressively promotes its use, it has denied claims that AMP affects rankings. It would be simple to say that AMP doesn’t give a site a ranking boost and stop there. Instead, here is what the evidence shows about AMP’s influence on search results and its relationship to other ranking factors.

The Arguments against AMP as A Ranking Element

This one is rather simple because Google has said that AMP does not affect rankings. Google claims that all pages are ranked using the same signals in its Advanced SEO guide:

Although speed is a ranking factor for Google Search, AMP itself is not. Regardless of the technology used to create the page, Google Search holds all pages to the same standard. This statement ties back to what we said earlier: AMP affects other things, such as page speed, which is a known ranking factor.

These additional signals may benefit websites that use AMP. Page speed has been a ranking factor for mobile searches since July 2018. Because AMP loads pages quickly, it helps websites send stronger ranking signals about mobile page performance. Better rankings may result from faster loading. Sites can still send the same signals without AMP, though.

Core Web Vitals

With the release of the Page Experience update in June 2021, Google’s Core Web Vitals became ranking factors.

Google’s message to website owners before the update’s release was that AMP can help in achieving the best Core Web Vitals scores.

Google has reported that AMP domains were five times more likely to pass Core Web Vitals than non-AMP domains. Meeting Google’s Core Web Vitals thresholds can improve a website’s search rankings.

Reduction in AMP Advantages

In the past, AMP had several benefits that might improve how a page appears in search results. When browsing for news items, for instance, Google’s Top Stories carousel, which shows at the top of search results, used to only allow AMP pages.

For a long time, Top Stories eligibility was a benefit exclusive to AMP.

This changed with the release of the Page Experience update in June 2021, because non-AMP pages can now appear in the Top Stories carousel.

Our Verdict: AMP Is Not a Ranking Factor

Google has often said that AMP does not influence search engine rankings. Additionally, it no longer offers distinguishing advantages that can affect click-through rates, such as a distinctive icon and exclusivity for Top Stories. AMP is not a ranking factor in and of itself, but it can have a favorable influence on other ranking factors (like speed).

Title Tags Are Replaced with Site Names by Google for Homepage Results

In mobile search results, Google now shows only the site name for results that represent a whole website, such as the home page. It appears to have stopped showing title tags for those results, for example on queries for a website’s name, which generally return the home page. This functionality does not work for subdomains. Only a website’s generic name is shown in smartphone searches.

As an example, the generic name of the website, Search Engine Land, is displayed on the search engine results page (SERP) for Search Engine Land on a mobile device.

The Title of The Home Page is:

For keyword searches that are not branded, the title tags seem to still be visible. The title tags appear to be displayed when a brand name and associated keywords are searched.

Why Do Google Searches Use Site Names?

Google employs site names to make it easier for users to recognize a certain website in the search results. In addition to English, French, Japanese, and German, this new functionality will be made available in other languages over the coming several months.

Ineffectiveness of New Features

Searching for a compound-word domain name either as “Search Engine Land” or as “searchengineland” returns the same results, with the new site name shown as the title link. However, a search for HubSpot returns the old version of the search results with the title tag, while a search for Hub Spot, with a space between the two words, returns the name of the website.

A New Site Names Feature for Structured Data

Google recommends using the WebSite structured data type. It was previously believed that the WebSite structured data type had no function, because Google already understood that a website was a website and didn’t need structured data to know that it was indexing one.

This has changed because Google now uses the “name” field of the WebSite structured data type, in particular, to identify the site name of a website.

How Does It Affect Sites With Different Names?

The value of the WebSite structured data comes from the ability to tell Google the website’s alternate name.

The following is how the structured data for the optional alternate name is presented:
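As an illustration only, the sketch below generates WebSite structured data as JSON-LD, with the site name in the `name` property and an optional alternate name in the schema.org `alternateName` property. The company name, URL, and helper function are hypothetical placeholders, not values from Google’s documentation.

```python
import json


def website_json_ld(name, url, alternate_name=None):
    """Build WebSite structured data as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "WebSite",
        "name": name,  # the site name Google may show in results
        "url": url,
    }
    if alternate_name:
        data["alternateName"] = alternate_name  # optional second name
    return json.dumps(data, indent=2)


markup = website_json_ld("Example Company", "https://example.com/", alternate_name="EC")
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed script tag would typically be placed in the HTML of the home page.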

Google Uses More Than Structured Data

In addition to structured data, Google also takes into account on-page, off-page, and metadata information when deciding what a website’s site name is, as stated in the Google site name guidelines.

Google determines the site name using the following sources:

- Structured data on the website

- Headings (H1, H2, etc.)

- Metadata from the Open Graph Protocol, notably og:site_name

Names of Google Sites

The new Google search feature that shows site names on mobile devices is appealing. It should mean less clutter in the SERPs for brand-name searches that return the home page. Even so, we can imagine some people complaining that the title tag no longer has any effect in these searches.

Facebook Is Going to End Live Shopping on October 1

From October 1, 2022, Facebook will no longer let users hold live shopping events, according to a blog post by Meta. It will instead transfer its attention to Reels, Meta’s short-form video product that is accessible on both Facebook and Instagram, citing a shifting preference for short-form videos among users as the motivating cause.

To simplify online buying and provide shops of all stripes the tools they need to expand their companies, Facebook introduced live shopping in August 2020. It offered an interactive opportunity to communicate with viewers and sell products.

Ecommerce shops will still be allowed to use the Facebook Live tool, but they will not be able to tag their products or create product playlists. Retailers will thus need to find other channels for selling goods on Facebook, such as buying display advertisements and building collections.

The Move Highlights Meta’s Increased Focus on Short Videos

Instagram users first reacted negatively to the video function, but it has grown in popularity. 11 of the top 20 Facebook posts in the fourth quarter of 2021 were short-form videos, according to the Integrity Institute, a social media research group.

As a result, businesses now have the chance to use videos to showcase products, provide calls to action, and interact with their target market. To help viewers explore and choose products, you can also tag products in Reels on Instagram. By enabling sellers and creators to promote their content with sponsored ads, Meta has also increased the chance that Reels will bring in money for the business.

Meta Announces First-Ever Drop in Ad Revenue

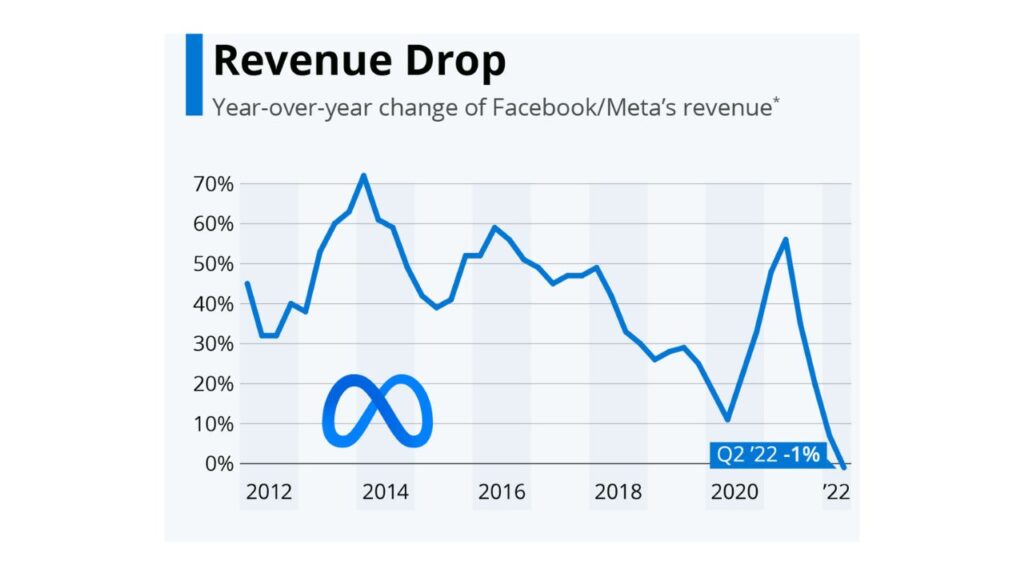

In its Q2 2022 financial report, Facebook parent company Meta discloses its first-ever year-over-year decline in advertising revenue, a downward trend that it expects to continue.

The ten-year run of ad revenue growth for Meta comes to an end in the Q2 2022 financial release. In light of these numbers, Waqar World will discuss why this is important, what it implies for marketers, and what Meta will do going forward.

Meta’s Revenue Drop Is Linked To the Economy

The enormous decline in Facebook’s revenue is the result of several issues. Mark Zuckerberg, the CEO of Meta, claims on an investor conference call that a general economic downturn is to blame for his firm failing to meet expectations. Let’s go through what he said:

“It appears that the economy has entered a slump, which will have a significant influence on the digital advertising industry. Although it’s always difficult to gauge how long or how deep these cycles will last, I’d argue that the current state of affairs is worse than it was a quarter ago”.

Problems for Meta

Along with a sluggish economy, Apple’s privacy settings are a problem for Meta. The slowdown in revenue growth began when Apple introduced the option for users to ask apps not to track them, and the current economic climate is only accelerating it. Because Meta has access to less information about users, people receive less relevant advertising in their feeds.

The advertising division at Meta is in even worse shape as a result, and Zuckerberg is warning investors to anticipate further revenue declines in the coming quarter. It’s not all bad news, though. We’ll go through additional report highlights in the next section.

What Numbers Are There?

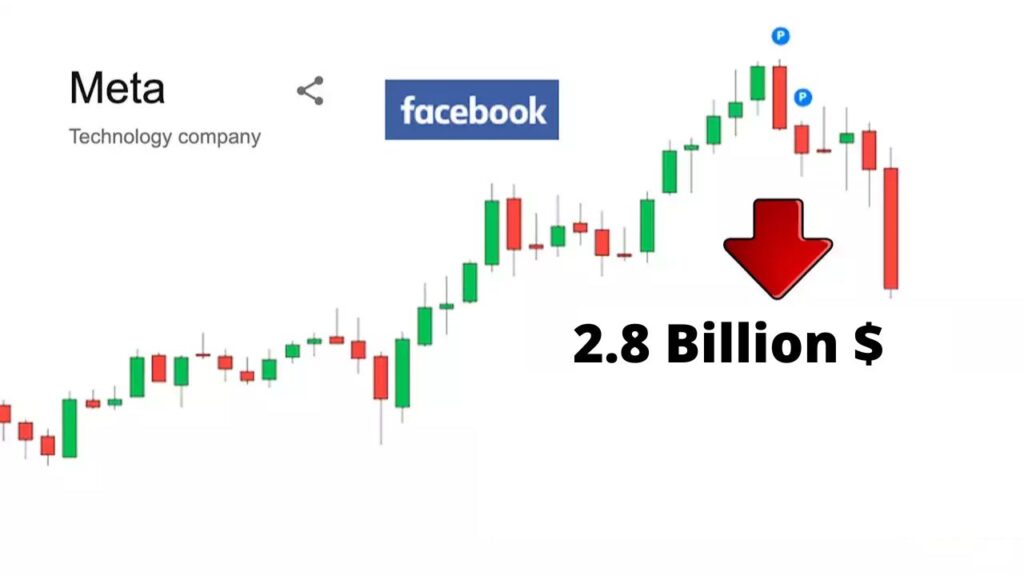

Comparing Q2 2022 to Q2 2021, Meta’s ad income decreased by 1%. Despite expecting to make $28.94 billion through advertising, Meta made $28.82 billion. Zuckerberg’s Reality Labs division, which is responsible for the Metaverse project, is expensive to run. In Q2, project-related expenses cost Meta $2.8 billion.

Facebook’s daily active users are up 3%, which is a promising development. There are about 1.97 billion daily logins. Across Facebook, Instagram, Messenger, and WhatsApp, daily active users rose 4% over the previous year.

There are no signs that consumers are abandoning Meta’s collection of apps, which suggests that the firm has a chance to increase income if it can figure out how to make advertisements more effective. We’ll discuss what this means for companies and marketers that regularly use Meta’s apps in the next section.

Why Does It Matter?

The popularity of Meta’s apps is in no way diminishing. The audience is there. The issue is that advertisers aren’t getting the same return on investment from their ads as they formerly did, and they are spending less as a result.

Meta intends to provide new forms of monetization to address the issue of diminishing ad income. More precisely, the business is figuring out how to profit from Reels. Zuckerberg emphasizes his commitment to constructing Facebook and Instagram around Reels in reaction to the Q2 financial announcement.

One of the only areas of Facebook and Instagram that isn’t fully monetized is the Reels viewer. So while it doesn’t produce income now, it may do so in the future. Meta wants to resemble TikTok more. Other types of content will ultimately receive less attention as Meta places more emphasis on Reels. To maintain exposure on Meta’s apps, companies and marketers should think about how to work short-form video into their mix. Reels could be a good option to increase your reach if you’re not seeing the results you want from Facebook advertisements.

The Name of Google’s New Programming Language Is Carbon

Carbon is a brand-new programming language created by Google engineers. It is an experimental language that might eventually replace C++. Google developer Chandler Carruth introduced the language on July 19, 2022, in Toronto, during the CPP North conference.

Because Rust is still relatively young and is difficult for many developers to learn, C++ arguably still lacks a successor language, and it is hard to call Rust that successor. Google is well known for releasing new programming languages and frameworks. Dart, for example, is a web-oriented programming language that Google introduced.

The popularity of Go among developers is remarkable. Go, or Golang, is a statically typed, general-purpose programming language, much like C.

Now, Google engineers are getting ready to introduce a new programming language called Carbon.

Key characteristics of Carbon:

- Carbon provides a modern generics system with checked definitions.

- Carbon focuses on code that is simple to write and read.

- The language is also meant to be quick and flexible to develop with.

- The language aims to support all hardware architectures, environments, and operating systems.

Launch of the Carbon programming language

The recent CPP North 2022 conference brought together many people to talk about the future of C++. There, Chandler Carruth, a Googler, unveiled Carbon, a new programming language.

Carbon was introduced as an experimental language and a possible replacement for C++. Chandler Carruth, the technical lead for Google’s programming languages, also said that they will begin this exploratory work together with the C++ community.

Carruth intends to build Carbon in a setting that is more democratic and community-driven. The project is developed on GitHub, with discussion on Discord.

Although Google first funded Carbon, the development team aims to limit contributions from Google and any other single company to less than 50% by the end of the year. In the end, they intend to hand the project over to an independent software foundation, whose volunteers will oversee its progress.

According to the creators, the first working version (“0.1”) will be released by the end of the year. Carbon will be built using modern programming practices, including a generics system that does not require code to be checked and rechecked for each instantiation.

Build up

As is well known, C++ is C’s successor. TypeScript is JavaScript’s successor. Swift is the replacement for Objective-C. Kotlin is Java’s successor. But what is C++’s replacement?

Is it Rust? Rust is often seen as the replacement for C++. However, it is still relatively young and quite challenging for developers to learn. It is therefore premature to call Rust C++’s successor, and it will be challenging for Rust to replace such a robust language.

Carbon Programming Language’s objectives

Carbon could be the next step in the evolution of software and programming languages. Carbon focuses on code that is simple to write and read. Additionally, it aims to offer practical safety and testing mechanisms for complex code.

Last but not least, we are interested in seeing how this C++ replacement will address C++’s shortcomings. The event also referred to its C++ and Golang underpinnings. Carbon is only in the experimental stage right now, but it is expected to enter beta and then be released. Carbon is an open-source project, and you can learn more and participate on its official GitHub page.